Ethercat random jitter fix

18 Apr 2026 02:03 - 18 Apr 2026 02:06 #345671

by grandixximo


Replied by grandixximo on topic Ethercat random jitter fix

For somebody trying hard to follow along from the sidelines, can you guys outline the objective for rolling back to 1.5.2 here?

Has anybody looked at the IgH EtherCAT master 1.6, or even 1.7, which was released on GitLab 3 weeks ago (but not in their repositories), to see if it has these delays outside of linuxcnc?

It seems unlikely to me that IgH master 1.6 or 1.7 are problematic, as people would be complaining at IgH, so to me the effort should go into understanding what has changed that linuxcnc-ethercat is not liking. Rolling back to a very old version does not seem a progressive step following the synchronisation enhancement.

Did it on pagagno-source request

Why don't we try installing Ethercat 1.5.2 on Trixie, so as to understand if the problem is on the kernel or Ethercat side?

This is just for testing. I pretty much knew it was a dead end when I started down this path, but it is so that we are all on the same page. I need to bring receipts; I won't simply hand-wave his concerns. He wanted 1.5.2 on Trixie so that he would be convinced that it is not the issue, and I gave him that. I don't want to come back to this point over and over again as we proceed with more testing: once it is proven that 1.5.2 does not solve the issue, we can move on to the next thing. I'm just trying to respect other people's perspectives. Call it an unnecessary waste of time if you want, and I can see where you are coming from, but I feel the best approach is to move systematically, one step at a time: clear up all concerns, test thoroughly, find agreement, then move to the next thing.

1.6 and 1.7 present the same OP time delays in my testing.

Last edit: 18 Apr 2026 02:06 by grandixximo.

The following user(s) said Thank You: rodw

18 Apr 2026 03:10 #345672

by rodw

Replied by rodw on topic Ethercat random jitter fix

Thanks for clarifying. I guess if 1.5.2 does not solve the issue, the next question is: has linuxcnc-ethercat changed since Debian 10? I suspect not, but can you review and confirm? If nothing has changed, it's a Debian kernel issue.

People forget that modern kernels (5.9 and later, which is well after Debian 10) are enormously complex, and hardware developers have been adding features for years (mostly power saving) that the Linux kernel unfortunately now supports. So there are a lot of tuning steps now. Perhaps there are some issues we have not covered in our known NIC tunings.

I've shared a 6.3 kernel I built in the past on Discord which many people had good results with. Perhaps it will help. It will be a few days before I can build a later kernel for Trixie.


18 Apr 2026 05:00 - 18 Apr 2026 05:40 #345675

by grandixximo

Replied by grandixximo on topic Ethercat random jitter fix

uname -r

6.12.74+deb13+1-rt-amd64

dpkg -l | grep linuxcnc-uspace

ii linuxcnc-uspace 1:2.9.4-2 amd64 motion controller for CNC machines and robots

dpkg -l | grep ethercat

ii ethercat-dkms 1.6.9.gb709e58-1+28.1 all IgH EtherCAT Master

ii ethercat-master 1.6.9.gb709e58-1+28.1 amd64 IgH EtherCAT Master

ii libethercat 1.6.9.gb709e58-1+28.1 amd64 IgH EtherCAT Master

ii linuxcnc-ethercat 1.40.0.g8a607c0-0 amd64 LinuxCNC EtherCAT HAL driver

I have done testing with the above. This is Debian 13 with everything installed from repos.

Generic driver, no ec_igc

20 devices

9 servos DC

1 VFD DC

10 I/O not DC

The 10 I/O always go to OP instantly.

I've tried to keep an eye on

watch -n0 "ethercat reg read -p2 -tsm32 0x92c"

Mine starts up quite randomly, within a range of + or - 3 million, and the starting value does not always correlate with how quickly the servos go to OP. More often than not they go to OP quickly when it starts as a small value, but the correlation is not perfect.

with refClockSyncCycles="1"

I get 40s to all OP, so about 4s per device, but some take 2s and some 6s, not a constant 4s.

with refClockSyncCycles="-1"

I get 12s to all OP, so about 1.2s per device. Again not constant, some quick and some slow, but overall much faster.

I have a 1 kHz servo loop (1000000 ns).

@Hakan, when you say 2 kHz you mean 500000 ns, correct?

Anyway, that is with Scott's code, nothing of mine. I will do further testing with your referenced commit, then test with my changes and with different kernels, to see whether the linuxcnc-ethercat -1 and +1 settings are the bigger factors here or not.
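The arithmetic behind these figures (loop frequency to period, and total time-to-OP spread across the 10 DC devices) can be checked with a minimal Python sketch. The function names are hypothetical helpers for illustration only, not part of LinuxCNC or linuxcnc-ethercat.

```python
def servo_period_ns(frequency_hz: float) -> int:
    """Convert a servo loop frequency in Hz to its period in nanoseconds."""
    return round(1_000_000_000 / frequency_hz)


def mean_seconds_per_device(total_seconds: float, device_count: int) -> float:
    """Average time for one device to reach OP, given the total for all devices."""
    return total_seconds / device_count


# Figures quoted in the post: 10 DC devices (9 servos + 1 VFD).
print(servo_period_ns(1000))            # 1 kHz loop -> 1000000
print(servo_period_ns(2000))            # 2 kHz loop -> 500000
print(mean_seconds_per_device(40, 10))  # refClockSyncCycles="1"  -> 4.0
print(mean_seconds_per_device(12, 10))  # refClockSyncCycles="-1" -> 1.2
```

The averages hide the spread reported above (2 s to 6 s per device), so they are only useful for comparing the two settings, not for predicting any single device.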

Last edit: 18 Apr 2026 05:40 by grandixximo.

18 Apr 2026 05:56 #345677

by Hakan

Replied by Hakan on topic Ethercat random jitter fix

The code that handles the initial synchronization in the EtherCAT master is 16 years old, except for a variable name change 8 years ago

gitlab.com/etherlab.org/ethercat/-/blame...e=heads&page=2#L1480

There are people with this "Did not sync after 5000 ms." problem but no real solution found.

I am sure the 0x092c register shows the symptoms of the problem.

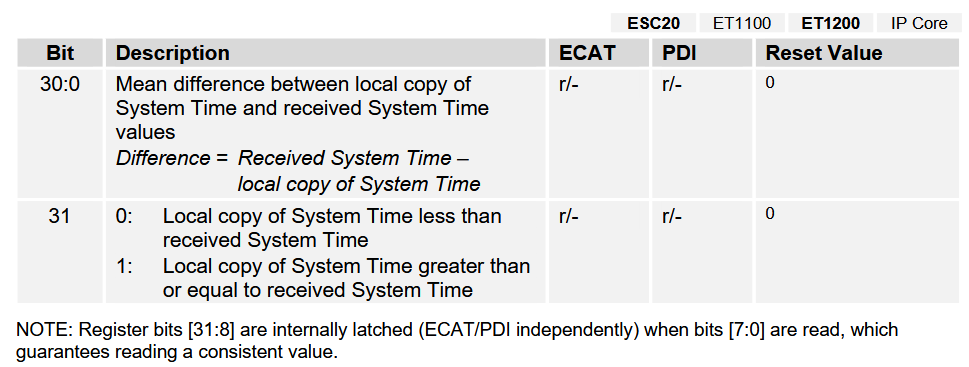

System Time Difference

This has me believing that either

- the slaves' clocks are drifting badly,

- the initial time the slaves get from lcec is not a good choice,

- or both.

Watching the 0x92c register during start, I often, though not always, see it start at 1000000 ns, which is 1 ms.

This is with a 1 ms linuxcnc servo loop time. When I go down to a 0.5 ms servo loop time, 0x92c often starts at 500000 ns,

so there is possibly a correlation.

This initial 1 or 0.5 ms value in 0x92c appears both when I start linuxcnc directly and when I let it sit overnight.

This suggests that it isn't a slave clock issue, but rather that the initial time from lcec is the problem.
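To make the suspected correlation easier to test over many startups, here is a minimal Python sketch. It assumes the raw 0x092c value uses 32-bit sign-and-magnitude coding (bit 31 as the sign, bits 0 to 30 as the magnitude in ns), which is what the `sm32` type of the `ethercat` tool suggests. The function names and the 50000 ns tolerance are hypothetical choices for illustration, not values from lcec or the master.

```python
def decode_sm32(raw: int) -> int:
    """Decode a raw 32-bit sign-and-magnitude value into a signed integer."""
    magnitude = raw & 0x7FFFFFFF          # bits 0..30 hold the magnitude
    return -magnitude if raw & 0x80000000 else magnitude  # bit 31 is the sign


def near_servo_period(offset_ns: int, period_ns: int, tolerance_ns: int = 50_000) -> bool:
    """True if the offset magnitude is within tolerance_ns of one servo period."""
    return abs(abs(offset_ns) - period_ns) <= tolerance_ns


# Example readings (made up for illustration, not measured data).
first_reading = decode_sm32(0x000F4240)               # +1000000 ns
print(first_reading)
print(near_servo_period(first_reading, 1_000_000))    # matches a 1 ms loop
print(near_servo_period(first_reading, 500_000))      # does not match a 0.5 ms loop
```

Logging the first reading after each start together with this flag would show how often the initial offset lands on one servo period, rather than relying on watching the register by eye. Note that `ethercat reg read -tsm32` already prints the decoded value, so `decode_sm32` is only needed when working from raw register bytes.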
